Digital citizen empowerment a sytematic literature review fusionado.pdf

Vista previa de texto

JeDEM 6(1): 69-79, 2014

73

The attribute mapping step incorporates disambiguation of the attributes proceeds through

linking them to the right concepts in the knowledge base (KB). We identify two kinds of mapping,

direct mapping and indirect mapping. Finding the name of the attribute attached to the intended

concept in the KB and linking to it is called direct mapping. Not always a direct mapping is present

for an attribute. Finding the right sense of a term through its synonyms is called indirect mapping.

Note that synonyms can be suggested by user or retrieved from a KB.

The data modelling step leads to understanding an entity type from the attributes extracted in the

previous step and finding it (if exists) or a suitable parent (if created newly) in the already existing

entity type lattice, a lightweight ontology (see (Giunchiglia, 2009)) formed with the concepts of the

entity types. In the entity type development step, we produce a specification of an entity type

defining all possible attributes, their data types (e.g. string, float) and meta attributes such as

permanence (e.g. temporary, permanent), presence (e.g. mandatory, optional) and category (e.g.

temporal, physical).

While producing entities of a given entity type, mandatory and optional attributes are filled in with

data, which are semantified and disambiguated wherever applicable. In fact data for an entity can

come from multiple resources. Through semantification, we facilitate the integration of loosely

coupled data. In the case of unavailability of a mandatory attribute in the possible resources, we

signal it to the data provider as pro-sumers, see (Charalabidis, 2014) and do not allow the creation

of the corresponding entity unless all necessary data are present. This is how we can improve the

vertical completeness.

4. Open Data Rise

10

Open Data Rise (ODR) is an open source web application for data curation of OGD. It allows to

easily fix errors in source data and also to enrich names by linking them to their precise meaning in

a process called semantification. The framework employs the entity-centric model (Giunchiglia,

11

2012) and extends OpenRefine , an open source tool for cleansing messy data. The

semantification pipeline implemented in ODR is the result of the collaborative effort research work

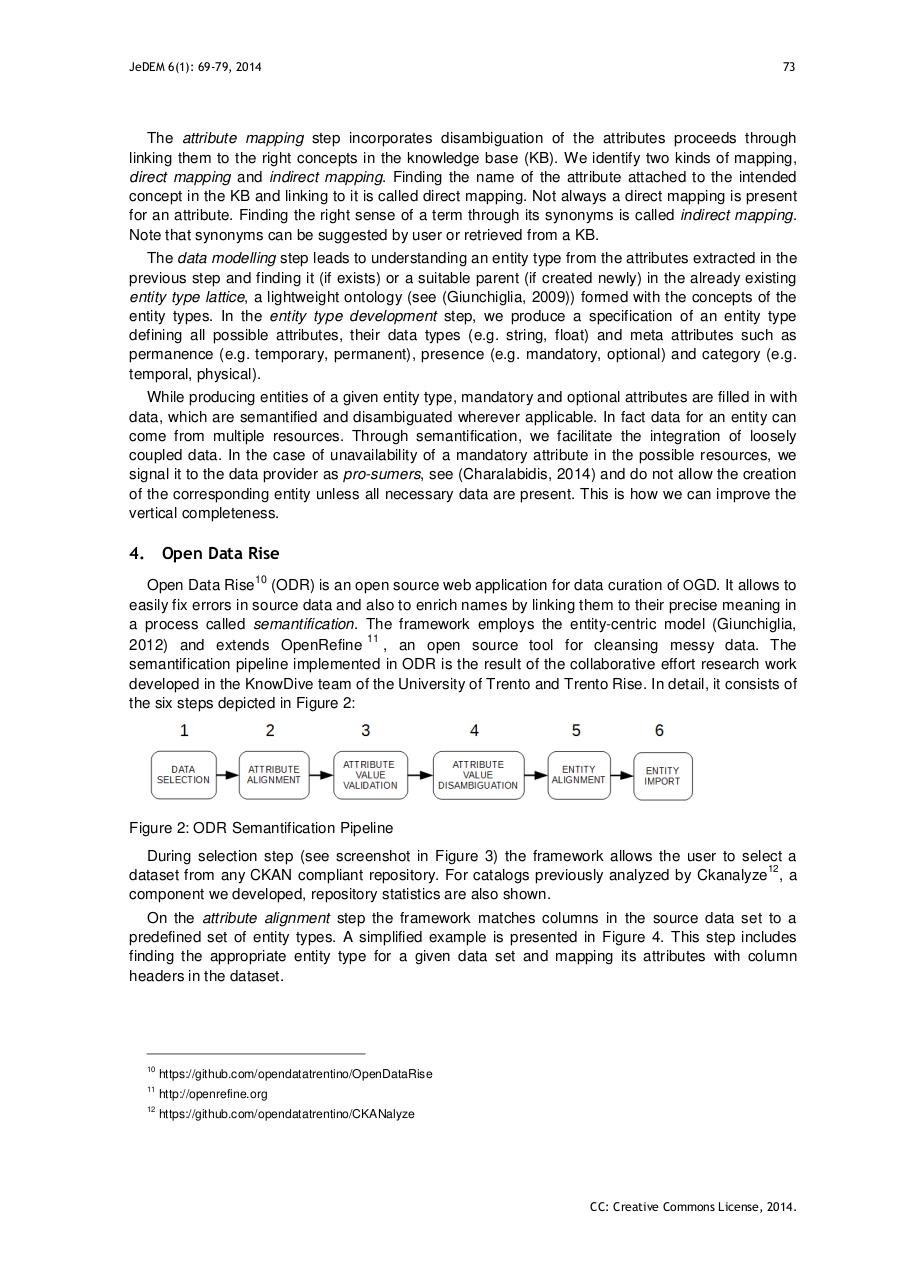

developed in the KnowDive team of the University of Trento and Trento Rise. In detail, it consists of

the six steps depicted in Figure 2:

Figure 2: ODR Semantification Pipeline

During selection step (see screenshot in Figure 3) the framework allows the user to select a

12

dataset from any CKAN compliant repository. For catalogs previously analyzed by Ckanalyze , a

component we developed, repository statistics are also shown.

On the attribute alignment step the framework matches columns in the source data set to a

predefined set of entity types. A simplified example is presented in Figure 4. This step includes

finding the appropriate entity type for a given data set and mapping its attributes with column

headers in the dataset.

10

https://github.com/opendatatrentino/OpenDataRise

11

http://openrefine.org

12

https://github.com/opendatatrentino/CKANalyze

CC: Creative Commons License, 2014.